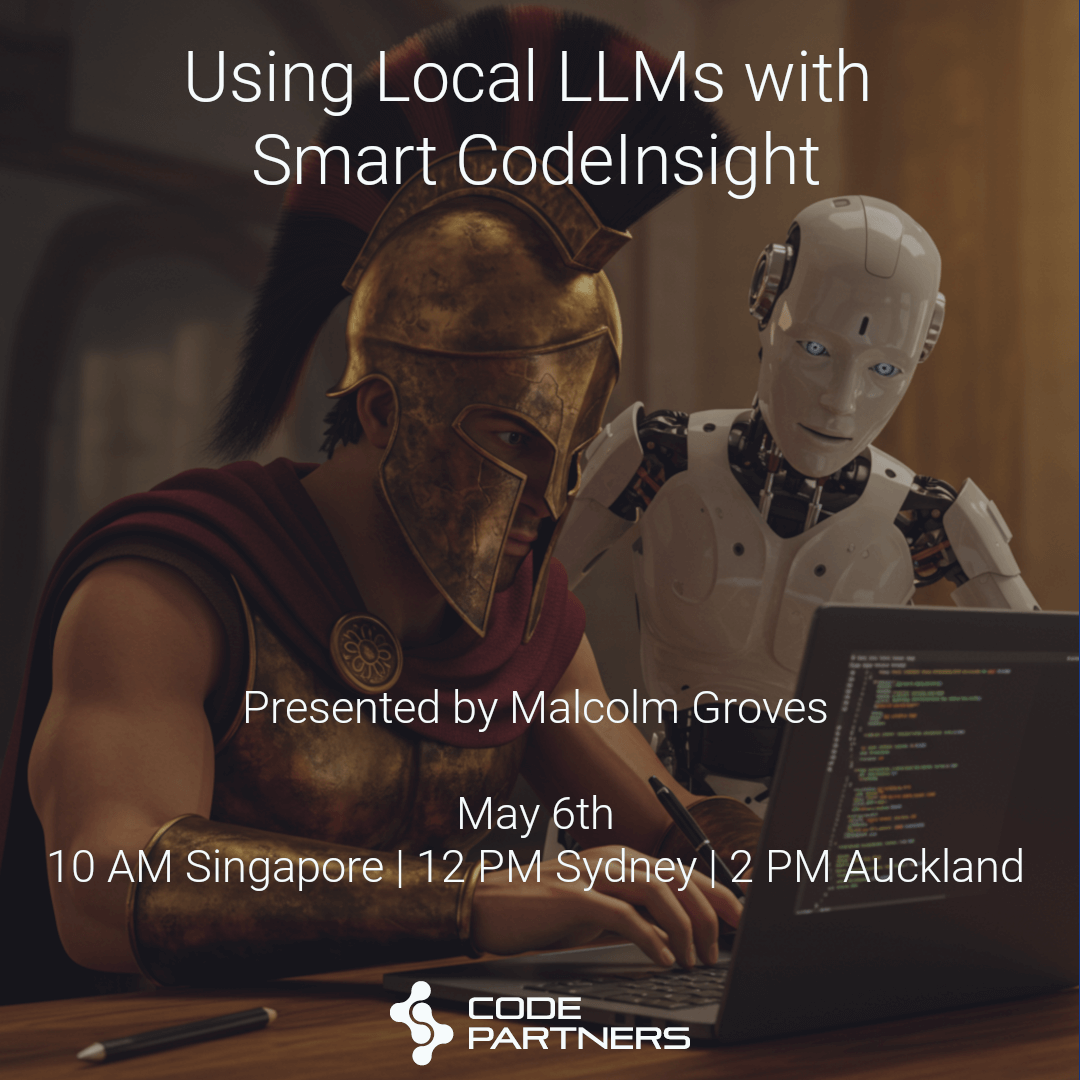

Webinar: Using Local LLMs with Smart CodeInsight

RAD Studio 12.2 introduced Smart CodeInsight, which brings AI-powered coding assistance into the IDE. One of its great features is that it can connect to several different LLMs, both cloud-hosted and local.

However, a number of our customers have not been able to take advantage of Smart CodeInsight. They are either unwilling or not permitted to use a hosted LLM because of IP protection or security concerns, and at the same time they are unsure how to choose, set up, and run a local LLM.

In this informal and practical webinar, Code Partners’ Malcolm Groves will deal with exactly this issue, covering:

- Installing and using Ollama
- How to choose the right local LLM for your requirements
- Connecting Smart CodeInsight to your local models
- Comparing results from different models, including Llama3.2, QwenCoder, Deepseek-Coder and more

The webinar will take place on Tuesday the 6th of May, at:

- 10am Singapore/Kuala Lumpur/Perth
- 12pm Sydney/Melbourne
- 2pm New Zealand